Implementing Simple Linear Regression in C#

Foreword

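I recently came across NumSharp and wanted to learn it. The best way to learn is by practice, so I found a simple linear regression implementation written in Python on GitHub and rewrote it in C# on top of NumSharp.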

Introduction to NumSharp

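NumSharp (NumPy for C#) is a multidimensional array library implemented in C#, with a design inspired by Python's NumPy. It provides NumPy-like array objects together with a rich set of operations on them. It is an open-source project that aims to give C# developers tools for efficient array processing in areas such as scientific computing, data analysis, and machine learning.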
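As a rough illustration of the NumPy-style array handling that NumSharp is modelled on, the sketch below uses NumPy itself in Python. The sample data and the function name `mean_squared_error` are made up for illustration, and the corresponding NumSharp calls are not shown here.

```python
import numpy as np

# Hypothetical sample data: each row is one (x, y) observation.
points = np.array([[1.0, 3.0],
                   [2.0, 5.0],
                   [3.0, 7.0]])

x = points[:, 0]  # first column: the x values
y = points[:, 1]  # second column: the y values


def mean_squared_error(b, m, x, y):
    """Mean squared error of the line y = m*x + b over all points."""
    residuals = y - (m * x + b)  # element-wise, no explicit loop
    return float(np.mean(residuals ** 2))


print(points.shape)                         # (3, 2)
print(mean_squared_error(1.0, 2.0, x, y))   # 0.0, the data lie exactly on y = 2x + 1
print(mean_squared_error(0.0, 0.0, x, y))   # (9 + 25 + 49) / 3, about 27.67
```

Slicing whole columns and operating on them element-wise is the main convenience such an array library offers over looping through the rows one at a time.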

Python code

Source of the Python code used:

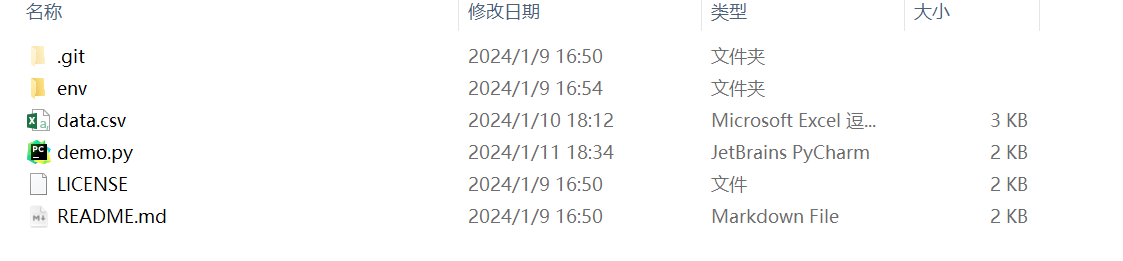

After downloading it locally, it looks like this:

The Python code is as follows:

# The optimal values of m and b can be actually calculated with way less effort than doing a linear regression.
# this is just to demonstrate gradient descent

from numpy import *

# y = mx + b
# m is slope, b is y-intercept
def compute_error_for_line_given_points(b, m, points):
    totalError = 0
    for i in range(0, len(points)):
        x = points[i, 0]
        y = points[i, 1]
        totalError += (y - (m * x + b)) ** 2
    return totalError / float(len(points))

def step_gradient(b_current, m_current, points, learningRate):
    b_gradient = 0
    m_gradient = 0
    N = float(len(points))
    for i in range(0, len(points)):
        x = points[i, 0]
        y = points[i, 1]
        b_gradient += -(2/N) * (y - ((m_current * x) + b_current))
        m_gradient += -(2/N) * x * (y - ((m_current * x) + b_current))
    new_b = b_current - (learningRate * b_gradient)
    new_m = m_current - (learningRate * m_gradient)
    return [new_b, new_m]

def gradient_descent_runner(points, starting_b, starting_m, learning_rate, num_iterations):
    b = starting_b
    m = starting_m
    for i in range(num_iterations):
        b, m = step_gradient(b, m, array(points), learning_rate)
    return [b, m]

def run():
    points = genfromtxt("data.csv", delimiter=",")
    learning_rate = 0.0001
    initial_b = 0 # initial y-intercept guess
    initial_m = 0 # initial slope guess
    num_iterations = 1000
    print("Starting gradient descent at b = {0}, m = {1}, error = {2}".format(initial_b, initial_m, compute_error_for_line_given_points(initial_b, initial_m, points)))
    print("Running...")
    [b, m] = gradient_descent_runner(points, initial_b, initial_m, learning_rate, num_iterations)
    print("After {0} iterations b = {1}, m = {2}, error = {3}".format(num_iterations, b, m, compute_error_for_line_given_points(b, m, points)))